this post was submitted on 11 Sep 2025


Technology




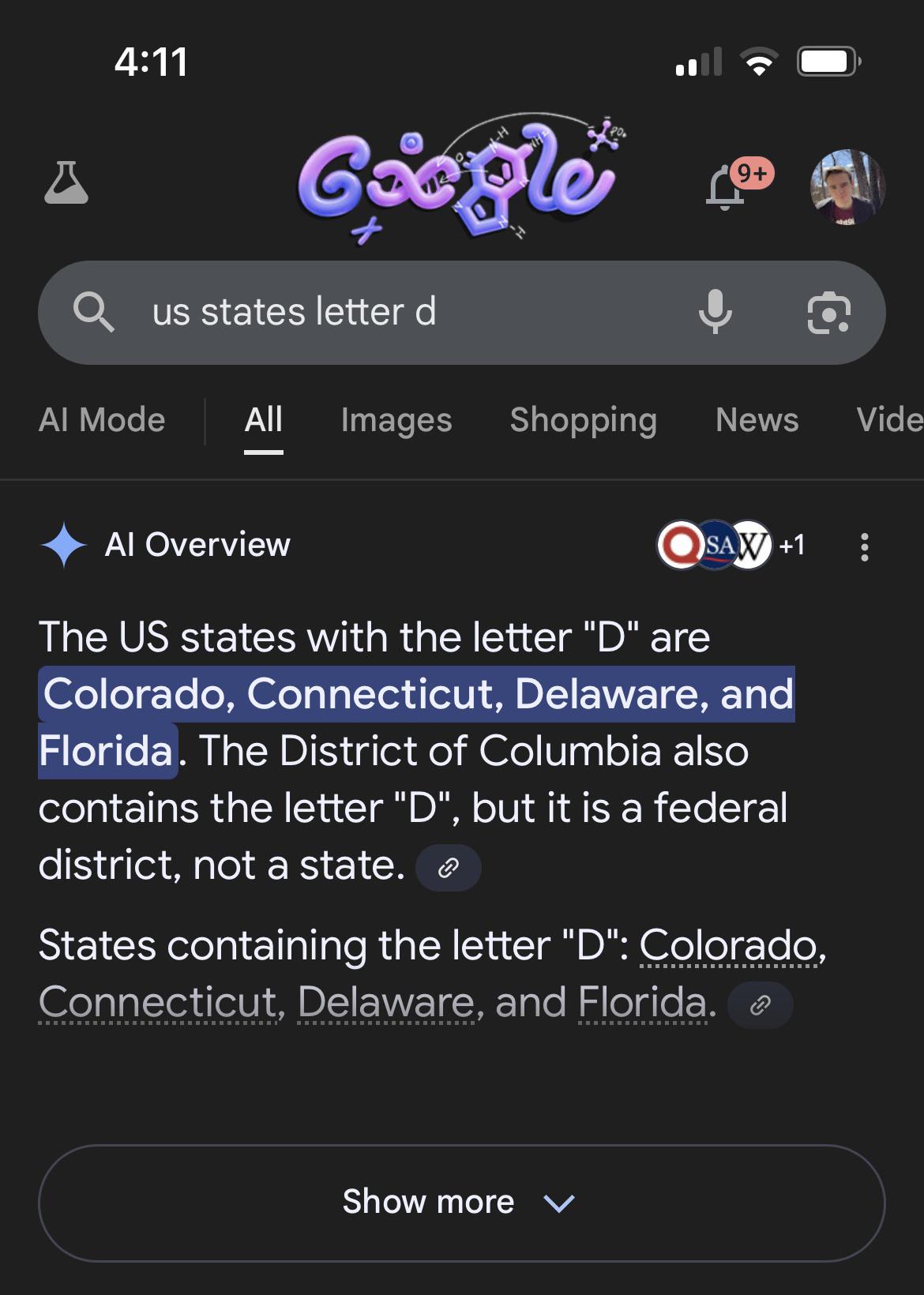

No, LLMs produce the token that is statistically most likely, according to their training data, to follow a given sequence of tokens. There's nothing remotely resembling reasoning going on in there; it's pure hard statistics, with some error and randomness thrown in. There are probably a lot more lists where Colorado is followed by Connecticut than ones where it's followed by Delaware, so they're obviously going to be more likely to produce the former.
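To make the "most likely next token" idea concrete, here's a minimal sketch: a toy bigram counter trained on a few made-up state lists. The corpus and every function name are hypothetical, and real LLMs are neural networks conditioning on the whole context rather than just the previous token, so treat this only as an illustration of picking the most frequent continuation (plus optional temperature-based randomness).

```python
import random
from collections import Counter, defaultdict

# Hypothetical toy "training data": a few lists of US state names.
# Real LLMs are trained on vastly more (and more varied) text.
corpus = [
    ["Arkansas", "California", "Colorado", "Connecticut", "Delaware"],
    ["California", "Colorado", "Connecticut", "Delaware", "Florida"],
    ["Colorado", "Delaware", "Florida"],  # e.g. a list of states containing "d"
]

def train_bigrams(sequences):
    """Count how often each token directly follows each other token."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts

def next_token_probs(counts, prev):
    """Normalize the follow-counts for `prev` into probabilities."""
    total = sum(counts[prev].values())
    return {tok: n / total for tok, n in counts[prev].items()}

def greedy_next(counts, prev):
    """Return the single most frequent follower of `prev`."""
    return counts[prev].most_common(1)[0][0]

def sample_next(counts, prev, temperature=1.0, rng=None):
    """Sample a follower of `prev`; higher temperature flattens the odds."""
    rng = rng or random.Random()
    probs = next_token_probs(counts, prev)
    # p ** (1/T) is equivalent to a softmax over log-probabilities divided by T.
    weights = [p ** (1.0 / temperature) for p in probs.values()]
    return rng.choices(list(probs), weights=weights, k=1)[0]

counts = train_bigrams(corpus)
print(next_token_probs(counts, "Colorado"))
print(greedy_next(counts, "Colorado"))  # → Connecticut
print(sample_next(counts, "Colorado", temperature=1.5, rng=random.Random(0)))
```

Even though the third list is exactly the kind of answer you'd want for "states with a d", Connecticut follows Colorado twice as often in this toy data, so the greedy pick is Connecticut and sampling only sometimes yields Delaware.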

Moreover, there aren't going to be many texts that spell out state names letter by letter (maybe transcripts of spelling bees?), so that information is unlikely to be in their training data. They can't extrapolate it either, partly because that's not really something they do, and partly because they use words or parts of words as tokens, not letters. So they literally have no way of listing the letters of a word if that list isn't in their training data (and, again, that's not something we tend to write; if we did, we wouldn't include a d in Connecticut, even if we were reading a misprint). The same goes for counting how many letters a word has, and similar tasks.
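Here's a rough sketch of the tokenization point: a greedy longest-match subword tokenizer over a tiny hypothetical vocabulary. The vocabulary, IDs, and function names are all invented for illustration; real tokenizers (e.g. BPE) learn their vocabularies from data and will split words differently. What it shows is that the model receives a short list of integer IDs rather than a sequence of letters.

```python
# Hypothetical subword vocabulary; real tokenizers (e.g. BPE) learn theirs
# from data, and their actual splits will differ.
VOCAB = {"Connect": 0, "icut": 1, "Color": 2, "ado": 3, "Del": 4, "aware": 5}
# Single letters as a fallback so any alphabetic word can be encoded.
for ch in "abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ":
    VOCAB.setdefault(ch, len(VOCAB))

def tokenize(word):
    """Split `word` greedily into the longest matching vocabulary pieces."""
    pieces, i = [], 0
    while i < len(word):
        for j in range(len(word), i, -1):  # try the longest piece first
            if word[i:j] in VOCAB:
                pieces.append(word[i:j])
                i = j
                break
        else:
            raise ValueError(f"no vocabulary entry for {word[i]!r}")
    return pieces

def encode(word):
    """What the model actually receives: integer IDs, not letters."""
    return [VOCAB[p] for p in tokenize(word)]

print(tokenize("Connecticut"))  # → ['Connect', 'icut']
print(encode("Connecticut"))    # → [0, 1]
print(encode("Delaware"))       # → [4, 5]
```

In this toy setup nothing in `[0, 1]` says whether Connecticut contains a d or how many letters it has; that would only be learnable if the training text happened to pair those IDs with their spellings.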