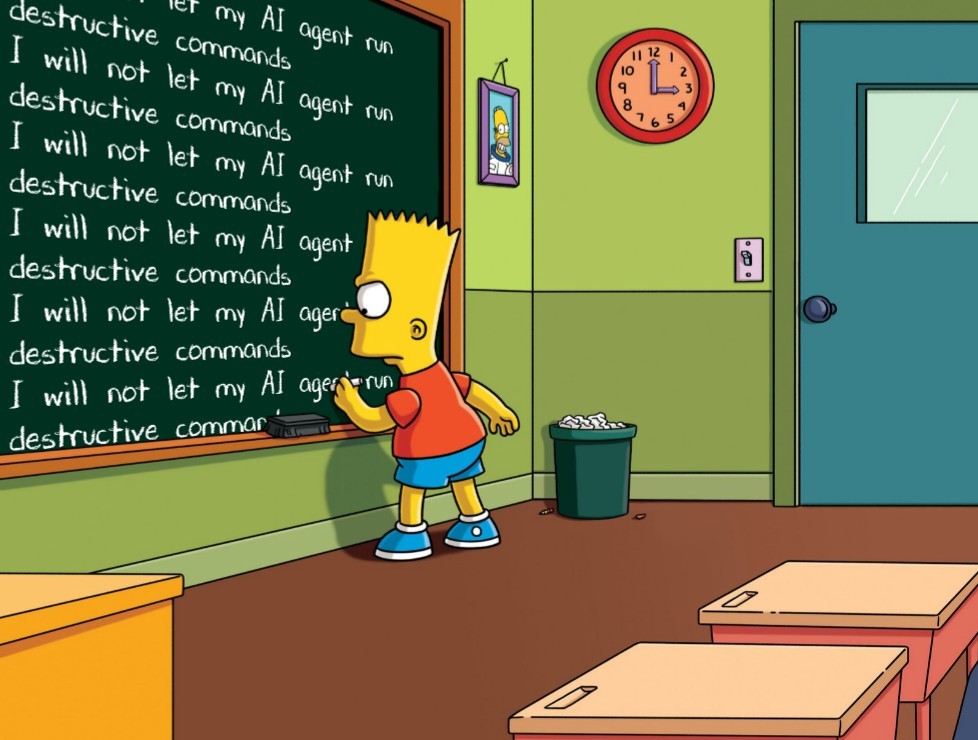

Ever hear of a backup?


How do you even achieve that? I have to coax it into correctly running the project locally.

I wonder which would be the worse idea: letting an LLM have full access to your critical systems and data, or letting random people from the internet freely connect to them and expecting them to help.

Lmao good.

What, is it a requirement for Claude to work that you "sudo chmod -R 777 /" or something?

skill issue tbh

The only job AI is gonna take is the intern who fucks everything up.

It legitimately is squeezing out the entry level already and that is its own problem. Maybe it's good for some of us, in that people with experience will be needed for a long time as they prevent all these younger people from getting that experience, but it absolutely sucks for a whole bunch of people trying to make a career, and it will eventually suck for the economy as a whole. AI, whether it's ready or not, or will even ever be fully what the marketing people claim it is, is leading to a whole lot of shortsighted decisions that are hurting people.

It's funny, because when I worked at places where I even had the rights to do something like this (excepting small companies where I was the multi-hat guy, but even there I made it so I had to jump through hoops to do something like this), it makes me go: this is crazy.

Good. Serves them right.

3-2-1

And nothing of value was lost